HMANet: Hybrid Multi-Axis Aggregation Network

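[CVPRW 2024] A hybrid multi-axis aggregation network for image super-resolution that achieves state-of-the-art (SOTA) performance on several benchmark datasets, including Urban100.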

Core Innovations

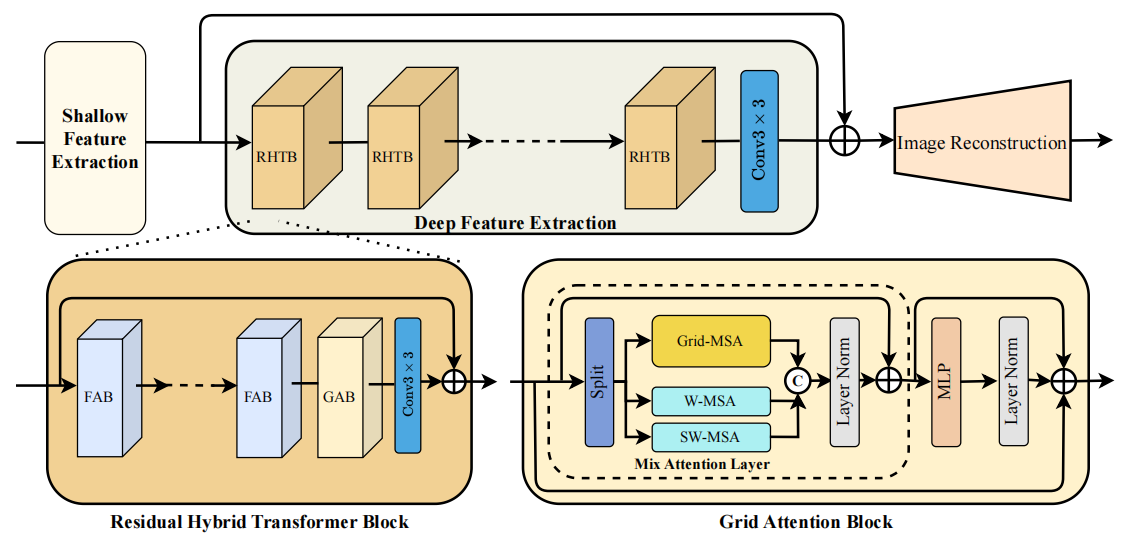

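Existing Transformer-based methods (e.g. SwinIR) typically restrict self-attention to non-overlapping windows, which limits the receptive field. To address this, HMA introduces two core mechanisms for capturing long-range dependencies: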

Grid Attention Block (GAB)

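A grid partitioning strategy (Grid Shuffle) breaks the local-window restriction and lets distant regions exchange information, which significantly enlarges the model's effective receptive field.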
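To make the difference between local windows and grid partitioning concrete, here is a minimal NumPy sketch. It is not taken from the HMA code base: `window_partition` and `grid_partition` are hypothetical helper names, and the reshape pattern is only one common way to implement grid-style grouping, assumed here for illustration.

```python
import numpy as np

def window_partition(x, size):
    """Split an (H, W) map into non-overlapping size x size local windows."""
    h, w = x.shape
    x = x.reshape(h // size, size, w // size, size)
    # Each output row holds the pixels of one contiguous window.
    return x.transpose(0, 2, 1, 3).reshape(-1, size * size)

def grid_partition(x, size):
    """Group size x size pixels sampled on a sparse grid spanning the whole map."""
    h, w = x.shape
    x = x.reshape(size, h // size, size, w // size)
    # Each output row holds pixels that are (H // size, W // size) apart.
    return x.transpose(1, 3, 0, 2).reshape(-1, size * size)

# Toy 4x4 "feature map" whose values are the flat pixel indices.
x = np.arange(16).reshape(4, 4)
local_windows = window_partition(x, 2)
grid_groups = grid_partition(x, 2)

print(local_windows[0])  # neighbouring pixels from the top-left corner
print(grid_groups[0])    # pixels spread across the whole map
```

Attention computed inside a row of `local_windows` only mixes neighbouring pixels, while attention inside a row of `grid_groups` mixes pixels from all four quadrants, which is the intuition behind the larger receptive field.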

Residual Hybrid Transformer Block (RHTB)

Combines channel attention with self-attention, strengthening non-local feature fusion while keeping the computation efficient.
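The channel-attention half of this block can be illustrated with a squeeze-and-excitation style sketch in NumPy. This is an assumption about the general technique, not the RHTB implementation: `channel_attention`, the random weights `w1` and `w2`, and the reduction ratio are all hypothetical and chosen only for the demonstration.

```python
import numpy as np

def channel_attention(x, w1, w2):
    """Rescale each channel of x with shape (C, H, W) by a learned weight in (0, 1)."""
    squeezed = x.mean(axis=(1, 2))                    # global average pool -> (C,)
    hidden = np.maximum(w1 @ squeezed, 0.0)           # bottleneck + ReLU -> (C // r,)
    weights = 1.0 / (1.0 + np.exp(-(w2 @ hidden)))    # sigmoid gate -> (C,)
    return x * weights[:, None, None], weights

rng = np.random.default_rng(0)
channels, reduction = 8, 4                            # illustrative sizes only
x = rng.standard_normal((channels, 6, 6))
w1 = rng.standard_normal((channels // reduction, channels))
w2 = rng.standard_normal((channels, channels // reduction))

y, weights = channel_attention(x, w1, w2)
print(y.shape, weights.round(3))
```

Because the gate is computed from a global average over the whole feature map, every channel weight depends on all spatial positions. This is a cheap source of global context that complements window-based self-attention.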

Figure 1. The overall architecture of HMANet.

Performance Highlights

On the texture-rich Urban100 dataset, HMA improves over SwinIR by up to 1.43 dB.

Across the x2, x3, and x4 scale factors, HMA consistently outperforms existing SOTA methods such as SwinIR and HAT.

Thanks to the GAB module, the model recovers edges and texture details more faithfully and produces fewer blurring artifacts.
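The dB figures above are assumed here to be PSNR, the usual metric for super-resolution benchmarks. The sketch below shows how PSNR follows from the mean squared error. The `psnr` helper is hypothetical and omits the border cropping and Y-channel conversion that benchmark scripts commonly apply.

```python
import numpy as np

def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    ref = np.asarray(reference, dtype=np.float64)
    out = np.asarray(restored, dtype=np.float64)
    mse = np.mean((ref - out) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

# Toy example: a flat image restored with a uniform error of 5 gray levels (MSE = 25).
reference = np.full((4, 4), 100.0)
restored = reference + 5.0
score = psnr(reference, restored)
print(f"PSNR: {score:.2f} dB")
```

Since PSNR is logarithmic in the MSE, a gain of 1.43 dB corresponds to an MSE ratio of 10^0.143, i.e. roughly 28% lower mean squared error.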

Quick Start

1. Installation

# 1. Clone the repository

git clone https://github.com/korouuuuu/HMA.git

cd HMA

# 2. Install dependencies

pip install -r requirements.txt

# 3. Install the package

python setup.py develop

2. Evaluation (Test)

Download the pretrained models from Google Drive (link in GitHub repo) and place them in the correct folder.

# Test on SR x2 (Example)

python hma/test.py -opt options/test/HMA_SRx2.yml

# The results will be saved in ./results/

Citation

@InProceedings{Chu_2024_CVPR,

author = {Chu, Shu-Chuan and Dou, Zhi-Chao and Pan, Jeng-Shyang and Weng, Shaowei and Li, Junbao},

title = {HMANet: Hybrid Multi-Axis Aggregation Network for Image Super-Resolution},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) Workshops},

month = {June},

year = {2024},

pages = {6386-6395}

}